Comparative studies of multimodal interactions currently suffer from a lack of consistent and standardized methods. To address this limitation, we introduce a unified framework supported by a new method for annotating, processing, and analyzing interaction data, while preserving key features such as interaction units, emitters, overlaps, and silences.

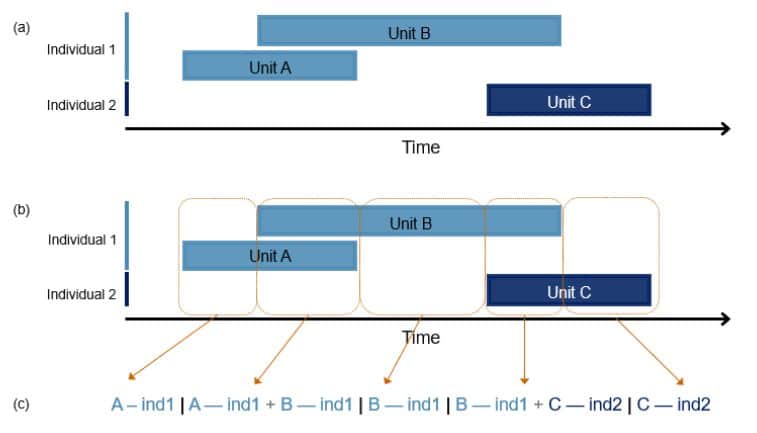

In this transcription of a hypothetical dyadic communicative interaction, the units emitted by individuals 1 and 2 appear along a temporal axis (a). The interaction is divided into ‘states’ (orange boxes), with a new state beginning at every change (i.e. whenever a unit disappears or a new one appears; b). After applying our transcription method, the states are grouped into a single sequence in which each state corresponds to a cell, with each unit attached to its emitter (c).
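The segmentation described in the caption can be illustrated with a minimal Python sketch. It is not the published implementation: the `Unit` record, the function names `segment_into_states` and `to_sequence`, the `emitter:label` cell notation, and the example timings are all hypothetical choices made for illustration. The sketch assumes each unit is annotated with an emitter, a label, and onset and offset times.

```python
from typing import NamedTuple


class Unit(NamedTuple):
    """One annotated unit (hypothetical record layout)."""
    emitter: str
    label: str
    start: float
    end: float


def segment_into_states(units):
    """Split the timeline at every unit onset or offset.

    Returns a list of (start, end, active) tuples, where `active`
    maps each emitter to the labels it is producing in that state.
    """
    boundaries = sorted({t for u in units for t in (u.start, u.end)})
    states = []
    for left, right in zip(boundaries, boundaries[1:]):
        active = {}
        for u in units:
            # A unit belongs to a state if it spans the whole interval.
            if u.start <= left and u.end >= right:
                active.setdefault(u.emitter, []).append(u.label)
        states.append((left, right, active))
    return states


def to_sequence(states):
    """Turn states into one cell each, keeping units tied to emitters."""
    cells = []
    for _, _, active in states:
        if not active:
            cells.append("silence")  # no unit from any emitter
        else:
            cells.append(" + ".join(
                f"{emitter}:{label}"
                for emitter in sorted(active)
                for label in sorted(active[emitter])
            ))
    return cells


# Hypothetical dyad: individual 1 emits A then C, individual 2 emits B.
units = [
    Unit("1", "A", 0.0, 2.0),
    Unit("2", "B", 1.0, 3.0),
    Unit("1", "C", 4.0, 5.0),
]
sequence = to_sequence(segment_into_states(units))
# sequence == ["1:A", "1:A + 2:B", "2:B", "silence", "1:C"]
```

In this toy example the overlap between A and B becomes its own state, and the gap between B and C is kept as an explicit silence cell, so the resulting sequence retains units, emitters, overlaps, and silences.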

Ultimately, this approach enables robust comparisons of interactions across different species.

2026. Animal Behaviour 234 (April): 123526 — @HAL