The 4th Edition of the ILCB Summer School offers Introductory and Advanced Classes in four core fields of Cognitive Science, reflecting the expertise of the Institute:

- Applied mathematics, statistics and networks;

- Neuroscience and behavior;

- Language and cognition;

- Computer science and machine learning.

Keynotes and social events complete this week of immersion.

KEYNOTE: Sophie Scott (UCL, London)

Contact: summer-school@ilcb.fr

Registration until July 11th, 2021, at: https://conferences.cirm-math.fr/2580.html

Fees: – ILCB Members: Free (please contact the administration for details of accommodation)

– Non-ILCB Members: contact the CIRM: https://www.fr-cirm-math.fr

SYLLABUSES

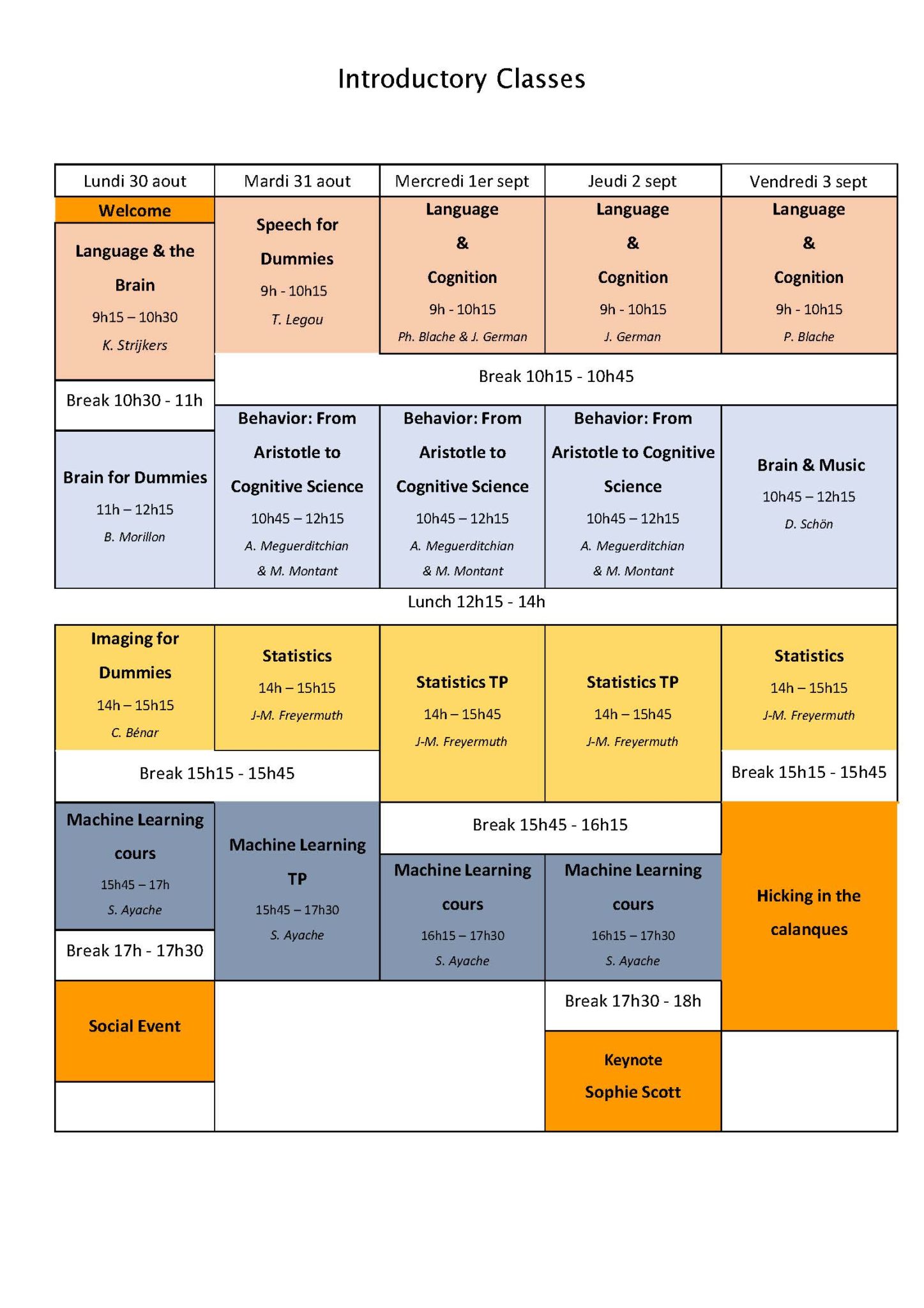

BASIC COURSES

Language & the Brain, Kristof Strijkers

In this course I will give a few key examples of brain language research in order to give you an idea of what cognitive neuroscience models of language look like, how they generate questions and predictions, and finally how those predictions can be tested by relying on cognitive paradigms combined with neuroscientific techniques. In this manner, I will address a specific empirical question and brain language model on the topics of semantics, phonology and syntax, respectively.

No prerequisite.

Speech for Dummies, Thierry Legou

Speaking is a complex activity that involves numerous high-level and low-level controls. While speech allows us to communicate by producing various identified classes of sounds, the voice conveys information about certain characteristics of the speaker and about their inner state.

We will first look at the vocal tract, identifying the main actuators/contributors (as well as their physical, mechanical and acoustic properties) that lead to the production of various sounds which, when combined, make speech production possible.

We will talk about the specificities of vowels and consonants, briefly presenting the main classical signal processing methods used to study and characterize them.

Online demos will help participants better understand the acoustic properties of the sounds produced, as well as the signal processing used to study these sounds.

Language & Cognition, James German & Philippe Blache

Language is made of different components at different levels: sounds (or letters), words, groups, sentences, utterances (or texts). It is usually considered that human language relies on the ability to combine low-level units into larger ones in order to build meaning. Understanding how language works then consists in describing these different levels through their corresponding linguistic domains (phonetics, prosody, phonology, morphology, syntax, semantics) and explaining how they interact. In this course we propose an overview of these domains and present the different theories explaining how meaning can be built from them.

Brain for Dummies, Benjamin Morillon

Teacher: Benjamin Morillon (Institut de Neurosciences des Systèmes; INS) – bnmorillon@gmail.com

This course will provide a general overview of the human brain, mainly through a historical, theoretical, and structural viewpoint.

No prerequisite.

Behavior: From Aristotle to Cognitive Science, Adrien Meguerditchian & Marie Montant

This course proposes an introduction to human and non-human animal behavior in relation to language studies and the question of the phylogenetic origins of the language faculty. The course is organized in two parts. In the first part, Adrien Meguerditchian will give a brief historical overview of how behavior has been assessed over time, from Aristotle to Darwin; he will then describe the concepts and methods proposed by the behaviorist paradigm, the biology of behavior and contemporary cognitive ethology. In the second part, Marie Montant will address several questions raised by the comparison between human and non-human animals. She will then describe the relationship between the complexity of behaviors and brain evolution, and how human behaviors are measured in cognitive paradigms.

No prerequisite.

Brain & Music, Daniele Schön

Daniele Schön will give a lecture about the predictive brain: How do we perceive the world surrounding us? What is the role of memory? How many real worlds exist? To what extent does our knowledge limit how we study brain functions? I will try to address these and other questions by adopting a musical view of brain functions.

Subjects we will talk about: why prediction is important, prediction or reality, prediction and memory, prediction and perception, predictive coding and the predictive brain, mathematical formalization (easy, no worries), music and predictions, language and predictions.

No prerequisite.

Imaging for Dummies, Christian-G. Benar

In this course, I will provide an overview of functional brain imaging in humans. Specifically, I will present four modalities: functional MRI, electroencephalography (EEG), magnetoencephalography (MEG), and intracerebral EEG (the latter being performed in patients during presurgical evaluation of epilepsy). For each modality, I will briefly review the underlying mechanisms, the recording technique and the mathematical tools for data analysis.

Statistics, Jean-Marc Freyermuth

This course introduces the basics of descriptive statistics using the R programming language. We discuss fundamental notions of descriptive statistics, in particular the concepts of population, variable and observation, as well as the representation of numerical data as tables and graphics, the measures of centrality and dispersion, and finally Exploratory Data Analysis. Real data examples are mostly chosen from neuroscience and cognitive science experiments. The last lecture focuses on the more advanced topic of network characterization of functional connectivity dynamics.

Prerequisite: basic knowledge of the R software

Machine Learning, Stéphane Ayache

This course aims to provide an overview of problem solving and data modeling from a machine learning perspective. The concepts of data representation, distribution and statistics, as well as training, validation and testing, will be reviewed, along with the details of some learning algorithms. Practical work will be performed on a concrete problem with standard tools, which will allow participants to see the basic concepts of programming in Python.

Prerequisite: a background in mathematics (algebra, probability and statistics) and in Python programming (including numerical computation with NumPy) is desirable. There will be hands-on sessions that require a Python programming environment, ideally with the Anaconda suite installed on the participants’ computers. If necessary, it will be possible to use online tools such as Google Colab.

ADVANCED COURSES

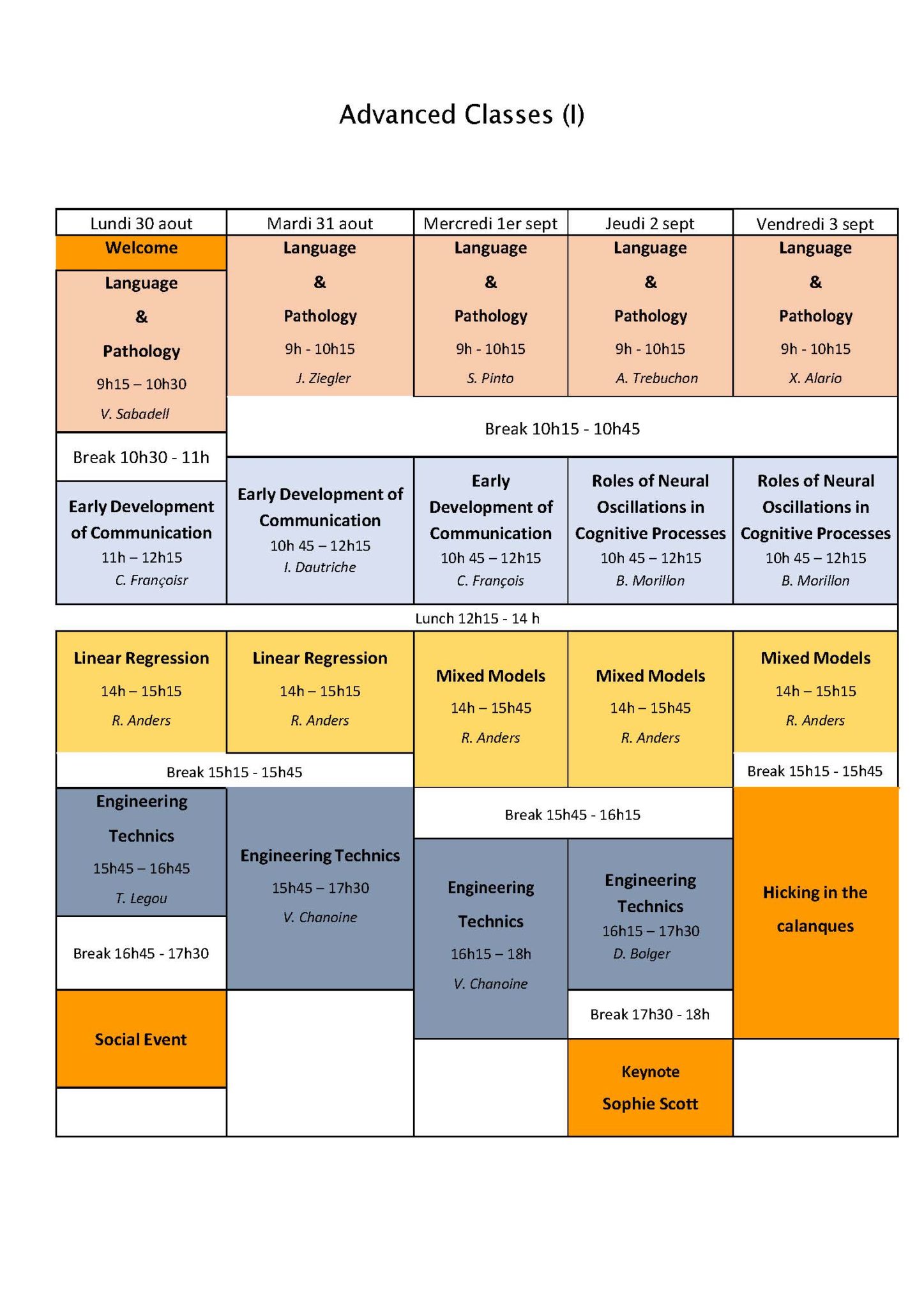

Language & Pathology, Agnès Trébuchon, Serge Pinto, Johannes Ziegler, Véronique Sabadell, Xavier Alario

Aphasia: implications of cognitive science for clinical practice and rehabilitation: Véronique Sabadell, speech-language therapist and PhD student in cognitive science, Institut de Neurosciences des Systèmes and Laboratoire de Psychologie Cognitive

Aphasia is an alteration of language abilities following a brain lesion. It can have very diverse manifestations. Traditional classifications have attempted to define distinct syndromic entities, among which Broca’s aphasia and Wernicke’s aphasia are the most frequently cited. Although widely used, particularly in clinical settings, these classifications are being challenged by advances in cognitive neuroscience and psychology. Language is now seen as a non-unitary, widely distributed function, in constant interaction with other cognitive functions such as sensory-motor integration, memory, and executive functions. I will present the implications of this updated perspective for language rehabilitation protocols.

“Studying speech motor control from its impairment: a general introduction to dysarthrias”: Serge Pinto, CNRS Research Director, Deputy Director of the Laboratoire Parole et Langage

Motor speech disorders are a set of signs affecting the control and production of speech as a consequence of neurological impairment. They are commonly divided into two categories, apraxia of speech and dysarthria, which can be distinguished on at least two fundamental points: (1) dysarthria is the consequence of motor dysfunctions that also involve the limbs (rigidity, akinesia, ataxia, dystonia, etc.) and whose specific pathophysiology is established; dysarthric disorders are constant and predictable, whereas this is not the case for patients suffering from apraxia of speech; (2) verbal dysfluency, marked in apraxic patients, is not characteristic of dysarthric speech. After presenting the classification and pathophysiology of dysarthrias associated with specific movement disorders, I will briefly introduce the relevance of research on dysarthria, mainly hypokinetic dysarthria in Parkinson’s disease, as a model for better understanding the involvement of cortico-basal ganglia-cortical pathways in speech motor control.

Basic notions of motor functional neurophysiology would be helpful, but they are not a prerequisite for this introductory course.

Learning to read and dyslexia: from theory to intervention: Johannes Ziegler, Aix-Marseille Université and CNRS

How do children learn to read? How do deficits in various components of the reading network affect learning outcomes? How does remediating one or several components change reading performance? In this talk, I will quickly summarize what we know about how children learn to read. I will then present a developmentally plausible model of reading acquisition. The model will be used to understand normal and impaired reading development (dyslexia). In particular, I will show that it is possible to simulate individual learning trajectories and intervention outcomes on the basis of three component skills: orthography, phonology, and vocabulary. The work advocates a multi-factorial approach to understanding reading that has practical implications for dyslexia and intervention.

Language pathology and Epilepsy: Agnès Trébuchon, Professor (PU), Neurologist and Neurophysiologist at Aix-Marseille University

In cases of drug-resistant epilepsy, surgical resection of the “seizure generator” is considered the treatment of choice. However, this procedure may induce language deficits, particularly after left temporal surgery. In this context, counseling individual patients about the risks and benefits of surgery can be challenging. The functional exploration of the language network is consequently crucial.

Through language deficits observed during seizures and during direct electrical stimulation (SEEG or awake surgery), we will discuss the clinical link between the language network and epilepsy, and the question of functional reorganization.

Connecting healthy and pathological language processing, F.-Xavier Alario, Laboratoire de Psychologie Cognitive, Aix-Marseille Université & CNRS

How different are healthy and pathological language processing? Can the study of patients inform our understanding of the general population? Can theories describing “canonical” language processing reliably inform clinical decisions? This segment of the “Language & Pathology” course will invite an interactive discussion of the links between healthy and pathological language processing. It will be based on the first four segments of the course, as well as on other examples. You will be expected to speak out, not only listen and write.

Prerequisite: Attentive attendance at the four previous segments of the course would be a plus.

Early Development of Communication, Clément François

Clément François will address the neuroanatomical development of language networks as well as the neuroplasticity phenomena underlying the early development of speech perception, with a focus on phonological refinement. To do so, he will present the results of behavioral and neuroimaging studies conducted in infants and toddlers.

The second lecture will concern word learning. Word learning is often considered the simplest and least controversial aspect of language development. Although theorists fiercely debate the ontogenetic and phylogenetic origins of grammar, everyone agrees that words must be learned by observing the contexts in which they are used. No other theory can explain how English-speaking children come to use ‘shoe’ to label footwear, whereas young French speakers use the same sequence of sounds to label cabbage. However, this self-evident truth masks a host of questions about how learning occurs and the knowledge that children bring to the problem. In this class, I will provide an overview of what we know about word learning in the very first years of life.

From a developmental point of view, it is unlikely that intentional communication appears from scratch at the end of the first year (Ramenzoni & Liszkowski, 2016). Presenting her current research, Marianne Jover will focus on the early development of communicative gestures and adults’ understanding of infants’ movements (Jover & Scola, 2018; Jover et al., 2019).

No prerequisite.

Roles of neural oscillations in cognitive processes, Benjamin Morillon

Teacher: Benjamin Morillon (Institut de Neurosciences des Systèmes; INS) – bnmorillon@gmail.com

These two courses will introduce the main mechanistic functions for which neural oscillations are believed to play a role in information processing: synchronisation of local and large-scale neural ensembles, inter-areal communication-through-coherence, and segmentation of continuous sensory information (such as speech) into discrete computational units. We will review different cognitive functions for which these mechanisms apply, such as spatial and temporal attention, and most importantly speech, language and communication. In particular, this includes speech parsing, inter-areal communication underlying language processing and inter-individual synchronisation during communication.

Prerequisites: basic notions of neurophysiology: neuron, action potential, membrane potential. Basic notions of signal processing: dimensions of a temporal signal (amplitude, time), an oscillatory signal (phase, frequency…). Spectral decompositions: Fourier or time-frequency.

Linear Regression & Mixed Models, Royce Anders

This course will provide both the theoretical background and skills to apply regression/mixed models in R/RStudio. Mixed models are some of the most popular analytical approaches in the human sciences, and the R programming language is widely used in academia. Topics include (but are not limited to) loading and assessing the integrity of your data set (missing values, outliers, etc.), distributional analysis and visualisation, mathematical understanding and requirements for an appropriate regression/mixed model, data transformations, model application, model checks and optimization, model selection, and if time permits, generalized linear mixed models such as with the logit family.

No prerequisite, but bring your computer with R and RStudio installed.

Engineering Techniques, Thierry Legou, Valérie Chanoine, Deirdre Bolger, Christelle Zielinski

Tuesday August 31 15:45-18:00 “CREX: MEG” at CIRM, Luminy, Marseille (Valérie Chanoine)

This course is an introduction to the practice of the MNE-Python toolbox, an open-source software package for exploring, visualizing, and analysing human neurophysiological data (magnetoencephalography, MEG; electroencephalography, EEG; or intracranial EEG data). Focus will be placed on visualization and analysis of MEG data both at the sensor level (at the periphery of the skull) and at the generator level (sources inside the brain).

Prerequisites: beginner level in Python. No prior knowledge of MEG is presumed.

Wednesday September 1 16:15-18:00 “CREX: fMRI” at CIRM, Luminy, Marseille (Valérie Chanoine)

This course introduces you to neuroimaging in functional Magnetic Resonance Imaging (fMRI) through practice using open-source Python packages. The aim is to visualize anatomical and functional images of a human brain from a functional MRI experimental protocol and to understand how to set up a Python pipeline with such input images.

Prerequisites: beginner level in Python. No prior knowledge of fMRI is presumed.

The Hitchhiker’s Guide to the Galaxy of EEG, Deirdre Bolger, Thierry Legou, Christelle Zielinski

In this class, key elements of electroencephalography (EEG) will be introduced via a live demonstration of EEG signal acquisition: the principle of EEG as well as its biophysical origins, its temporal and oscillatory activity and common signal processing approaches applied to reveal these properties. An example of the brain’s response to stimulus characteristics will be presented via a demonstration of a simple neurofeedback paradigm. Participants will also be taken on a brief excursion into the relatively recent world of “Art and Neuroscience” with a more unusual presentation of the EEG signal.

Prerequisites: no prior knowledge of EEG or signal processing is required.

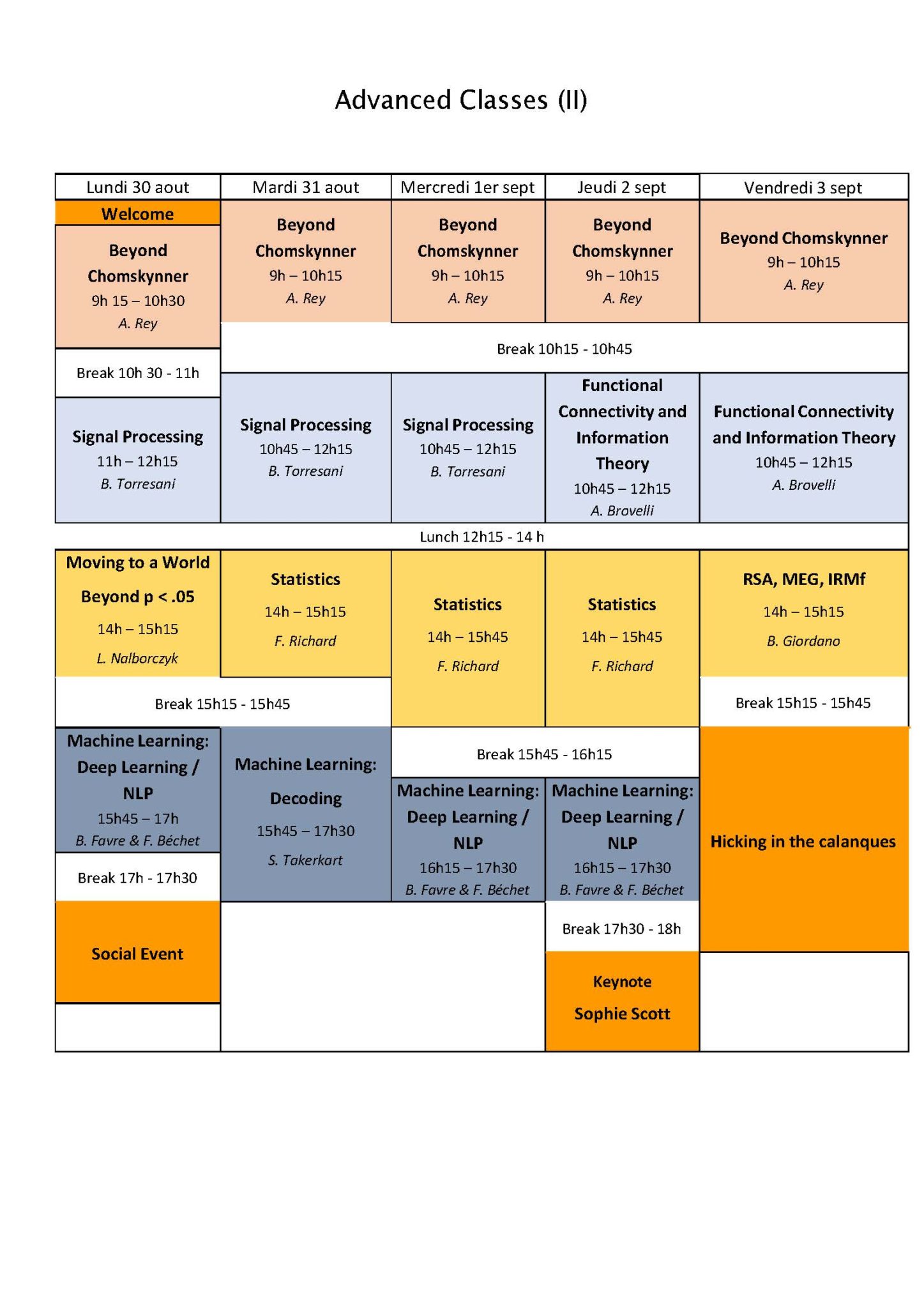

ADVANCED COURSES

Beyond Chomskynner, Arnaud Rey

In 1957, the publication of Syntactic Structures by N. Chomsky and Verbal Behavior by B. F. Skinner introduced two radically different approaches to the study of language. After a brief and critical presentation of these approaches, I will pave the way to current approaches based on language use and implicit statistical learning, showing that these approaches have slowly created a favorable climate for a paradigm shift in the study of language processes. I will also argue that the central notion of syntax should certainly be reconsidered or possibly abandoned.

No prerequisite.

Signal processing: spectral analysis, time-frequency and sparsity, Bruno Torresani

- Lecture 1: Spectral analysis and time-frequency

The focus in this first lecture will be on signal analysis. We will review reference methods for spectral analysis (Fourier transform, periodogram) and time-frequency analysis (short-time Fourier transform and periodogram, Gabor transform, …). If possible, we will also briefly discuss iterated filter banks (convolution networks and scattering transform).

- Lecture 2: Time-frequency and wavelets, synthesis

In the second lecture we will revisit time-frequency methods from the point of view of synthesis rather than analysis. We will describe the basics of frame theory, discrete transforms and the construction of linear systems defined in the time-frequency domain.

- Lecture 3: Sparse time-frequency representations

The last lecture will be devoted to the concept of sparsity, and will focus on standard algorithms for constructing sparse time-frequency and wavelet representations.

Functional Connectivity and Information Theory, Andréa Brovelli

I will present an overview of the mathematical and computational tools for the analysis of functional connectivity (FC) among neural signals. I will introduce notions such as non-directed versus directed (e.g., Granger causality) FC, and time- versus frequency-domain FC measures. Finally, I will present recent advances in Information Theory that allow the characterisation of task-related neural interactions.

Prerequisites: basics of probability theory; basics of cognitive functions and brain networks

Moving to a world beyond p < .05, Ladislas Nalborczyk

Statistics, Frédéric Richard

This course is an introduction to statistical inference that aims to familiarize participants with estimation and testing issues. After recalling some useful notions about random variables, we will first see how to estimate and test the mean value (expectation) of samples of random variables with an unknown distribution. During two practical sessions carried out in Python, we will then study in detail the case of samples from the Bernoulli distribution. Finally, in the last part, we will address inference problems in the framework of Gaussian samples.

Prerequisite: basics of probability theory.

RSA, MEG, fMRI, Bruno Giordano

Machine Learning: Deep Learning / NLP, Benoit Favre, Frédéric Bechet

This class will give an overview of the main approaches for modeling language with deep learning, from recurrent neural networks to transformers. Then, it will explore representation learning techniques for natural language processing, their promises, their limits, and why they are ubiquitous tools for language-related applications. A practical tutorial will complement this presentation by letting students implement linguistic probes in PyTorch as a tool to better understand BERT representations. This course is part of a series: a first class on Monday afternoon, August 30, is followed by two more classes on September 1 and 2.

Prerequisites: fundamentals of machine learning and data science, Python programming and deep learning; experience with PyTorch is a plus.

Decoding the brain using machine learning, Sylvain Takerkart

KEYNOTE LECTURE:

Sophie Scott (UCL, London)

This talk will be followed by a lunch! Registration is free but mandatory. Please confirm attendance by sending an email to nadera.bureau@blri.fr